Prioritization should not happen in a meeting where the loudest voice wins. Here's a better process that uses data.

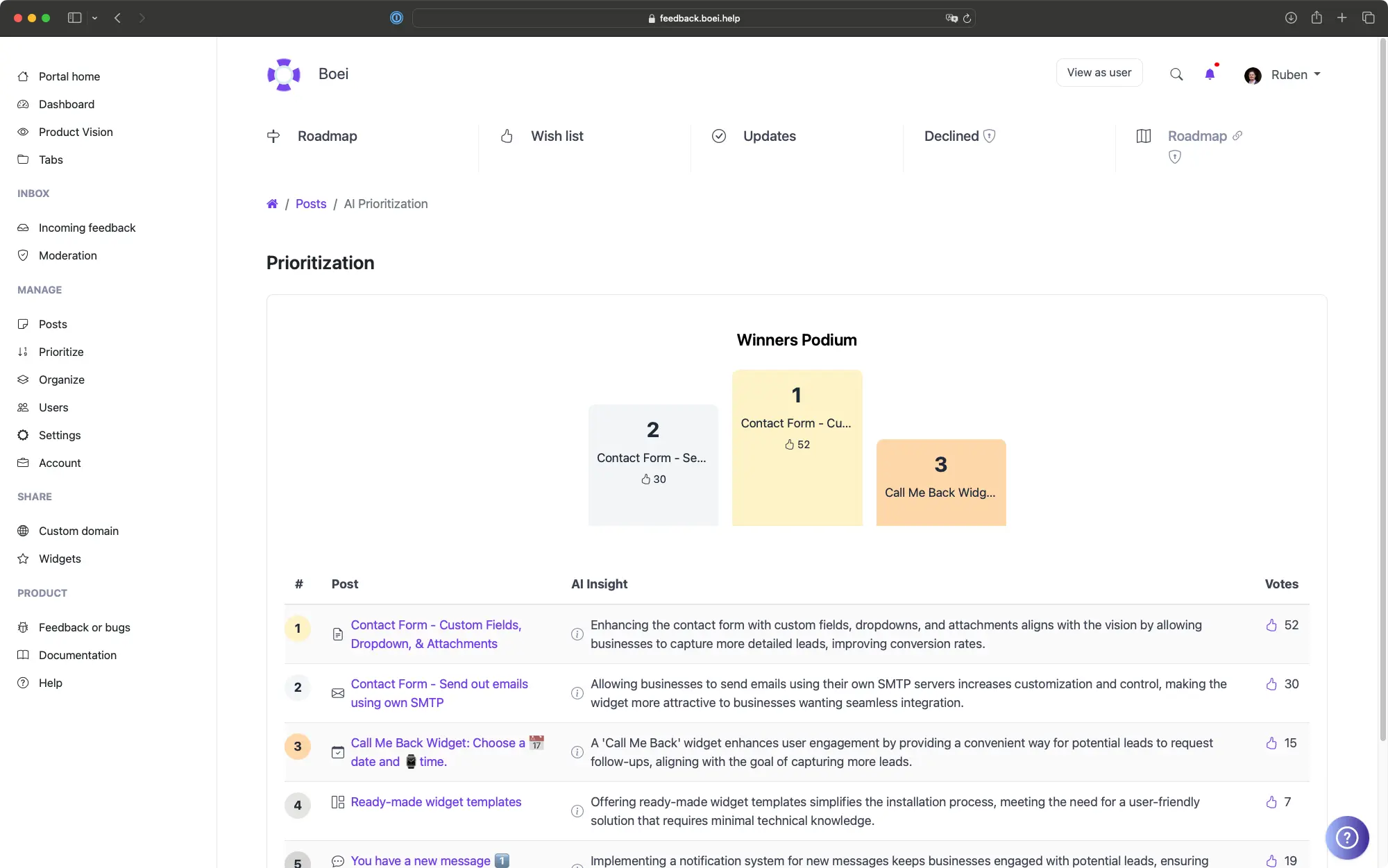

Step 1: Let AI score your backlog. Run AI Prioritization against your Product Vision. This gives you a ranked list in minutes. Review the top 20 and bottom 20 to calibrate whether AI is capturing your intent correctly.

Step 2: Layer in revenue data. Sort by Total Voter MRR. Are your highest-paying customers asking for something AI ranked low? That is worth investigating. Revenue data doesn't override strategic alignment, but it adds an important signal.
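As a rough illustration of that check, the sketch below flags items that sit near the top by Total Voter MRR but that AI ranked low. It assumes you have the backlog as a plain list of records; the field names (`ai_rank`, `total_voter_mrr`), the sample data, and both cutoffs are hypothetical and do not come from any ProductLift export format or API.

```python
# Hypothetical backlog records; field names and values are illustrative only.
items = [
    {"title": "Audit log", "ai_rank": 42, "total_voter_mrr": 12500},
    {"title": "Slack integration", "ai_rank": 3, "total_voter_mrr": 8000},
    {"title": "Custom themes", "ai_rank": 55, "total_voter_mrr": 900},
    {"title": "Bulk import", "ai_rank": 7, "total_voter_mrr": 300},
]


def flag_mismatches(items, top_n_by_mrr=2, low_rank_cutoff=20):
    """Return titles that are in the top N by MRR but ranked below the cutoff by AI.

    A larger ai_rank number means a lower priority (rank 1 is the top item).
    """
    by_mrr = sorted(items, key=lambda i: i["total_voter_mrr"], reverse=True)
    return [
        i["title"]
        for i in by_mrr[:top_n_by_mrr]
        if i["ai_rank"] > low_rank_cutoff
    ]


mismatches = flag_mismatches(items)
print(mismatches)
```

Items returned here are not automatically promoted; they are the ones worth a closer look before the ranking is finalized.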

Step 3: Apply a scoring framework. Use RICE or ICE on your top 30 candidates. This forces your team to estimate effort and impact explicitly instead of relying on gut feeling.
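For reference, RICE is commonly computed as Reach × Impact × Confidence ÷ Effort, and ICE as Impact × Confidence × Ease. The sketch below applies both formulas to a few made-up candidates. The feature names, the estimates, and the units (reach per quarter, effort in person-months) are assumptions for illustration, not values from the product.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    reach: float       # users affected per quarter (assumed unit)
    impact: float      # relative impact estimate, e.g. 0.25 to 3
    confidence: float  # 0.0 to 1.0
    effort: float      # person-months (assumed unit), must be > 0


def rice_score(c: Candidate) -> float:
    """RICE = Reach * Impact * Confidence / Effort."""
    if c.effort <= 0:
        raise ValueError("effort must be positive")
    return c.reach * c.impact * c.confidence / c.effort


def ice_score(impact: float, confidence: float, ease: float) -> float:
    """ICE = Impact * Confidence * Ease, each usually scored 1 to 10."""
    return impact * confidence * ease


# Hypothetical candidates with made-up estimates.
candidates = [
    Candidate("SSO login", reach=400, impact=2, confidence=0.8, effort=4),
    Candidate("Dark mode", reach=1500, impact=0.5, confidence=1.0, effort=2),
    Candidate("CSV export", reach=600, impact=1, confidence=0.5, effort=1),
]

ranked = sorted(candidates, key=rice_score, reverse=True)
for c in ranked:
    print(f"{c.name}: {rice_score(c):.1f}")
```

The value of the exercise is less the final number than the fact that each input has to be written down and can be challenged.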

Step 4: Filter by User Segments. Use segments to see what percentage of Enterprise customers vs. Starter plan customers want each feature. A feature requested by 60% of your Enterprise segment is a very different signal from one requested by 60% of your free tier.
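The per-segment percentage is simple to compute by hand if you want to sanity-check it. The sketch below assumes a mapping from customer to segment and a set of voters per feature; the customer IDs, segment assignments, and votes are invented for the example.

```python
from collections import Counter


def segment_share(voters, segment_of):
    """Return, per segment, the percentage of its customers who voted for a feature."""
    totals = Counter(segment_of.values())
    wanted = Counter(segment_of[c] for c in voters if c in segment_of)
    return {seg: 100 * wanted[seg] / total for seg, total in totals.items()}


# Hypothetical customers and their segments.
segment_of = {
    "acme": "Enterprise", "globex": "Enterprise", "initech": "Enterprise",
    "hooli": "Enterprise", "umbrella": "Enterprise",
    "s1": "Starter", "s2": "Starter", "s3": "Starter",
    "s4": "Starter", "s5": "Starter",
}

# Hypothetical votes: feature -> set of customers who requested it.
votes = {
    "SSO login": {"acme", "globex", "initech", "s1"},
    "Dark mode": {"s1", "s2", "s3", "hooli"},
}

shares = {feature: segment_share(v, segment_of) for feature, v in votes.items()}
print(shares)
```

In this made-up data both features reach 60% in one segment, but in opposite segments, which is exactly the distinction a raw vote count would hide.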

Step 5: Save your analysis. Use saved queries in ProductLift to keep the filtered views you return to regularly. Create views like "High MRR requests this quarter" or "Top voted unplanned items" so you can revisit them without rebuilding the filter each time.